Just another quick example of colouring Arabic glyphs, showing that you can selectively colour glyph sub-paths.

Category Archives: Unicode

Glyph chart for ScheherazadeRegOT

I’m in the middle of writing the first of a new series of articles on using the libotf C library to typeset fully vowelled Arabic. I hope to get the first article finished in the next week or two. In the meantime, here’s a glyph chart for the free OpenType Arabic typeface called Scheherazade, produced by, and available for download from, SIL International. Many thanks to them for providing this typeface, together with the Microsoft VOLT project files (contained in the developer package).

One way to compile GNU Fribidi as a static library (.lib) using Visual Studio

Introduction and caveat reader

Yesterday I spent about half an hour seeing if I could get the GNU Fribidi C library (version 0.19.2) to build as a static library (.lib) under Windows, using Visual Studio. Well, I cheated a bit and used my MinGW/MSYS install (which I use to build LuaTeX) in order to create the config.h header. However, it built OK so I thought I’d share what I did; but do please be aware that I’ve not yet fully tested the .lib I built, so use these notes with care. I merely provide them as a starting point.

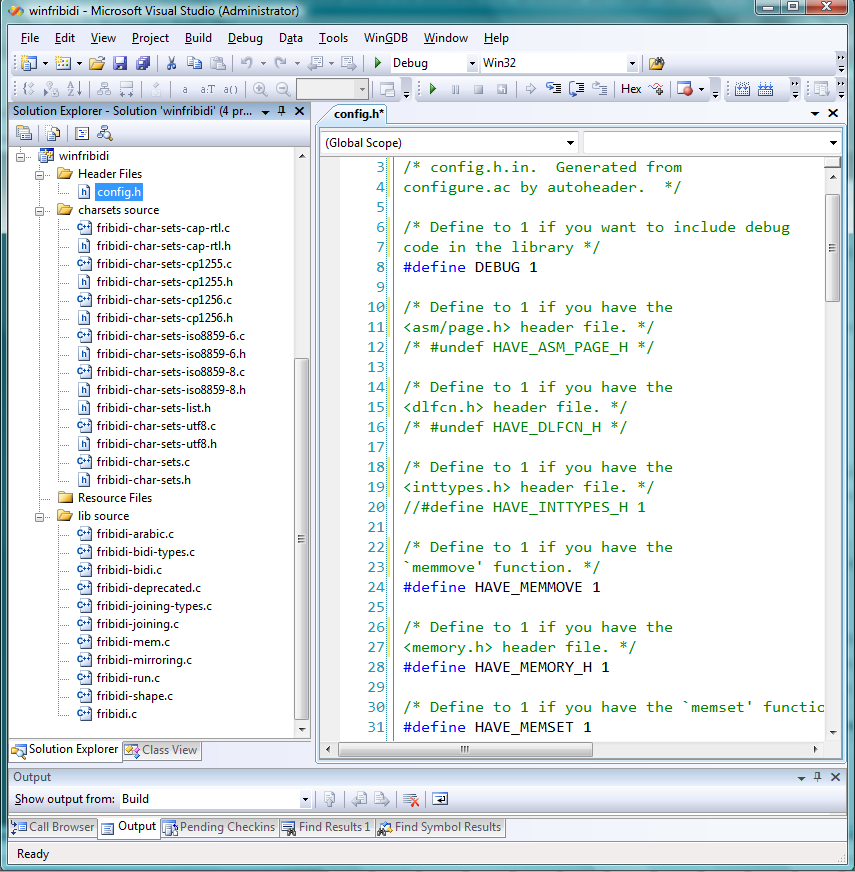

config.h

If you’ve ever used MinGW/MSYS or Linux build tools you’ll know that config.h is a header file created by the configure step of the standard Linux-style build process. In essence, config.h sets a number of #defines based on your MinGW/MSYS build environment: you need to copy the resulting config.h into your Visual Studio project and include it there. However, the point to note is that the config.h generated by the MinGW/MSYS build process may contain #defines which “switch on” certain headers etc. that are not available to your Visual Studio setup. What I do is comment out a few of the config.h #defines to get a set that works. This is a bit kludgy, but to date it has usually worked out for me. If you don’t have MinGW/MSYS installed, you can download the config.h I generated and tweaked. Again, I make no guarantees it’ll work for you.

An important Preprocessor Definition

Within the Preprocessor Definitions options of your Visual Studio project you need to add one called HAVE_CONFIG_H which basically enables the use of config.h.

Two minor changes to the source code

Because I’m building a static library (.lib) I made two tiny edits to the source code. Again, there are better ways to do this properly. The change is to the definition of FRIBIDI_ENTRY. Within common.h and fribidi-common.h there are tests for WIN32 which end up setting:

#define FRIBIDI_ENTRY __declspec(dllexport)

For example, in common.h

...

#if (defined(WIN32)) || (defined(_WIN32_WCE))

#define FRIBIDI_ENTRY __declspec(dllexport)

#endif /* WIN32 */

...

I edited this to

#if (defined(WIN32)) || (defined(_WIN32_WCE))

#define FRIBIDI_ENTRY

#endif /* WIN32 */

i.e., remove the __declspec(dllexport). Similarly in fribidi-common.h.
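One of the “better ways” might be to make the export decoration conditional instead of deleting it, so the same headers can serve both a DLL and a static build. The following is only a sketch: FRIBIDI_STATIC_BUILD is a hypothetical macro name I have made up for illustration (it would be added to the project’s Preprocessor Definitions alongside HAVE_CONFIG_H), and demo_version is a made-up function standing in for a real library entry point.

```c
/* Sketch only. FRIBIDI_STATIC_BUILD is a hypothetical macro name invented
   for this illustration; it would normally be set in the project's
   Preprocessor Definitions rather than in a source file. */
#if defined(FRIBIDI_STATIC_BUILD)
#  define FRIBIDI_ENTRY                        /* static .lib: no decoration */
#elif defined(WIN32) || defined(_WIN32_WCE)
#  define FRIBIDI_ENTRY __declspec(dllexport)  /* DLL build: export the symbol */
#else
#  define FRIBIDI_ENTRY                        /* other platforms: nothing needed */
#endif

/* demo_version is a made-up function, used here only to show where the
   macro appears in a declaration. */
FRIBIDI_ENTRY int demo_version(void)
{
    return 1;
}
```

With this arrangement the original dllexport behaviour is kept for DLL builds, and the static build is selected by a project setting rather than by editing the headers.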

One more setting

Within fribidi-config.h I ensured that the FRIBIDI_CHARSETS was set to 1:

#define FRIBIDI_CHARSETS 1

And finally

You simply need to create a new static library project and make sure that all the relevant include paths are set correctly and then try the edits and settings suggested above to see if they work for you. Here is a screenshot of my project showing the C code files I added to the project. The C files are included in the …\charset and …\lib folders of the C source distribution.

With the above steps the library built with just 2 level 4 compiler warnings (that is, after I had added the _CRT_SECURE_NO_WARNINGS preprocessor definition to silence the deprecation warnings). I hope these notes are useful, but do please note that I have not thoroughly tested the resulting .lib file, so please be sure that you perform your own due diligence.

Introduction to logical vs display order, and shaping engines

Introduction

In a previous post I promised to write a short introduction to libotf; however, before discussing libotf I need to “set the scene” and write something about logical vs display (visual) order and “shaping engines”. This post covers a lot of ground and I’ve tried to favour being brief rather than providing excessive detail: I hope I have not sacrificed accuracy in the process.

Logical order, display order and “shaping engines”

Logical order and display order

Imagine that you are tapping the screen of your iDevice, or sitting at your computer, writing an e-mail text in some language of your choice. Each time you enter a character it goes into the device’s memory and, of course, ultimately ends up on the display or stored in a file. The order in which characters are entered and stored by your device, or written to a text file, is called the logical order. For simple text written in left-to-right languages (e.g., English) this is, of course, the same order in which they are displayed: the display order (also called the visual order). However, for right-to-left languages, such as Arabic, the order in which the characters are displayed or rendered for reading is reversed: the display (visual) order is not the same as the logical order.
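To make the distinction concrete, here is a deliberately naive sketch in C: it takes a run of code points in logical order and produces the display order by simply reversing it. The function name logical_to_display_rtl is my own invention, and the approach only makes sense for a run made up purely of right-to-left letters. Real software uses the Unicode Bidirectional Algorithm (which is what GNU Fribidi implements) to cope with mixed-direction text, numbers and punctuation.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Copy n code points from logical[] into display[] in reverse order.
   Naive stand-in for real bidirectional processing: only meaningful for
   a run consisting purely of right-to-left letters. The two buffers must
   not overlap. */
void logical_to_display_rtl(const uint32_t *logical, uint32_t *display, size_t n)
{
    assert(logical != NULL && display != NULL);
    for (size_t i = 0; i < n; i++) {
        display[i] = logical[n - 1 - i];
    }
}
```

For example, the three letters KAF (U+0643), TEH (U+062A), BEH (U+0628) are stored in that order, but the leftmost glyph on screen is BEH and the rightmost is KAF.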

Arabic Unicode ranges

The Unicode 6.1 Standard allocates several ranges to Arabic (ignoring the latest Unicode 6.1 additions for Arabic maths). These are:

- Arabic

- Arabic Supplement

- Arabic Extended-A (new for Unicode 6.1)

- Arabic Presentation Forms-A

- Arabic Presentation Forms-B

The important point here is that for text storage and transfer, Arabic should be encoded/saved using the “base” Arabic Unicode range of 0600 to 06FF. (Caveat: I believe this is true but, of course, am not 100% certain. I’d be interested to know if this is indeed not the case.) However, I’ll assume this principle is broadly correct.
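As a small illustration of these ranges, the sketch below classifies a code point by Unicode block and counts how many code points in a piece of text come from the two Presentation Forms blocks, which would suggest that the text was not stored using the “base” range. The type and function names (arabic_block, arabic_block_of, count_presentation_forms) are hypothetical names of my own; the block boundaries are those given in the Unicode code charts.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

typedef enum {
    ARABIC_NONE = 0,     /* not in any of the blocks listed below */
    ARABIC_BASE,         /* Arabic:                      0600-06FF */
    ARABIC_SUPPLEMENT,   /* Arabic Supplement:           0750-077F */
    ARABIC_EXTENDED_A,   /* Arabic Extended-A:           08A0-08FF */
    ARABIC_FORMS_A,      /* Arabic Presentation Forms-A: FB50-FDFF */
    ARABIC_FORMS_B       /* Arabic Presentation Forms-B: FE70-FEFF */
} arabic_block;

/* Return the Arabic block containing cp, or ARABIC_NONE. */
arabic_block arabic_block_of(uint32_t cp)
{
    if (cp >= 0x0600 && cp <= 0x06FF) return ARABIC_BASE;
    if (cp >= 0x0750 && cp <= 0x077F) return ARABIC_SUPPLEMENT;
    if (cp >= 0x08A0 && cp <= 0x08FF) return ARABIC_EXTENDED_A;
    if (cp >= 0xFB50 && cp <= 0xFDFF) return ARABIC_FORMS_A;
    if (cp >= 0xFE70 && cp <= 0xFEFF) return ARABIC_FORMS_B;
    return ARABIC_NONE;
}

/* Count code points that come from either Presentation Forms block. */
size_t count_presentation_forms(const uint32_t *text, size_t n)
{
    assert(text != NULL || n == 0);
    size_t count = 0;
    for (size_t i = 0; i < n; i++) {
        arabic_block b = arabic_block_of(text[i]);
        if (b == ARABIC_FORMS_A || b == ARABIC_FORMS_B) {
            count++;
        }
    }
    return count;
}
```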

If you look at the charts you’ll see that the range 0600 to 06FF contains the isolated versions of each character; i.e., none of the glyph variations used for fully joined Arabic. So, looking at this from, say, LuaTeX’s viewpoint, how is it that the series of isolated forms of Arabic characters sitting in a TeX file in logical order can be transformed into typeset Arabic in proper visual display order? The answer is that the incoming stream of bytes representing Arabic text has to be “transformed” or “shaped” into the correct series of glyphs.
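Before any shaping can happen, that stream of bytes has to be turned back into Unicode code points. Assuming the TeX file is saved as UTF-8, a minimal decoder might look like the sketch below. The function name utf8_decode is my own, and the code is intentionally incomplete: it checks the basic byte structure but does not reject overlong encodings or surrogate values, which a production decoder should.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Decode UTF-8 bytes into code points. Returns the number of code points
   written to out, or -1 if the input is malformed or out is too small.
   Minimal sketch: overlong encodings and surrogates are not rejected. */
long utf8_decode(const unsigned char *bytes, size_t len,
                 uint32_t *out, size_t out_cap)
{
    assert(bytes != NULL && out != NULL);
    size_t i = 0, count = 0;
    while (i < len) {
        unsigned char b = bytes[i];
        uint32_t cp;
        size_t extra;                 /* continuation bytes expected */
        if (b < 0x80)                { cp = b;        extra = 0; }
        else if ((b & 0xE0) == 0xC0) { cp = b & 0x1F; extra = 1; }
        else if ((b & 0xF0) == 0xE0) { cp = b & 0x0F; extra = 2; }
        else if ((b & 0xF8) == 0xF0) { cp = b & 0x07; extra = 3; }
        else return -1;               /* stray continuation or invalid lead byte */
        if (extra > len - i - 1 || count == out_cap) return -1;
        for (size_t k = 1; k <= extra; k++) {
            unsigned char c = bytes[i + k];
            if ((c & 0xC0) != 0x80) return -1;   /* not a continuation byte */
            cp = (cp << 6) | (uint32_t)(c & 0x3F);
        }
        out[count++] = cp;
        i += extra + 1;
    }
    return (long)count;
}
```

Every character in the range 0600 to 06FF occupies two bytes in UTF-8; for example, the bytes D9 83 decode to KAF (U+0643). The decoded code points are still isolated characters in logical order: nothing has been shaped yet.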

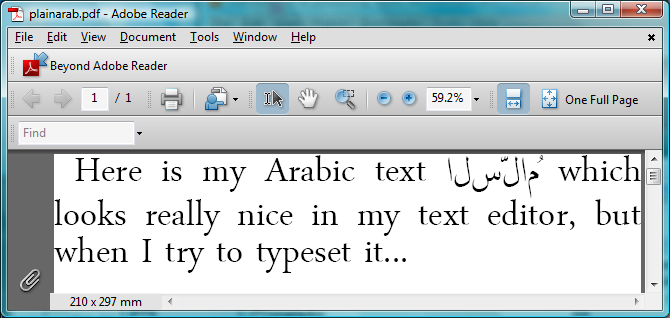

A little experiment

Just for the moment, assume that you are using the LuaTeX engine but with the plain TeX macro package: you have no other “packages” loaded but you have set up a font which has Arabic glyphs. All the font will do is allow you to display the characters that LuaTeX sees within its input stream. If you enter some Arabic text into a TeX file, what do you think will happen? Without any additional help or support to process the incoming Arabic text LuaTeX will simply output what it gets: a series of isolated glyphs in logical (not display) order:

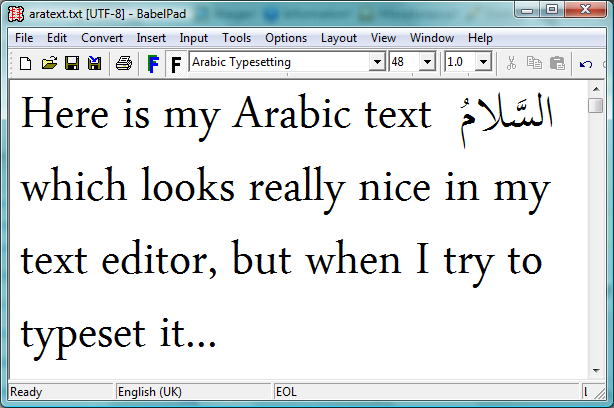

But it looked perfect in my text editor…:

Shaping engines

What’s happening is that the text editor itself is applying a shaping engine to the Arabic text in order to render it according to the rules of Arabic text processing and typography: you can think of this as a layer of software which sits between the underlying text and the screen display: transforming characters to glyphs. The key point is that the core LuaTeX engine does not provide a “shaping engine”: i.e., it does not have an automatic or built-in set of functions to automatically apply the rules of Arabic typesetting and typography. The LuaTeX engine needs some help and those rules have to be provided or implemented through packages, plug-ins or through Lua code such as the ConTeXt package provides. Without this additional help LuaTeX will simply render the raw, logical order, text stream of isolated (non-joined) characters.

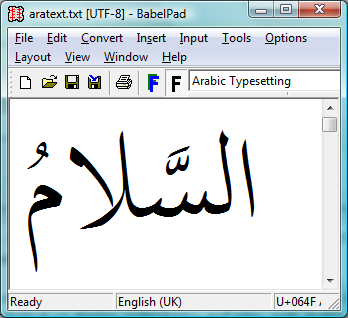

Incidentally, the text editor here is BabelPad which uses the Windows shaping engine called Uniscribe, which provides a set of “operating system services” for rendering complex scripts.

Contextual analysis

Because Arabic is a cursive script, with letters that change shape according to position in a word and adjacent characters (the “context”), part of the job of a “shaping engine” is to perform “contextual analysis”: looking at each character and working out which glyph should be used, i.e., the form it should take: initial, medial, final or isolated. The Unicode standard (Chapter 8: Middle Eastern Scripts ) explores this process in much more detail.

If you look at the Unicode code charts for Arabic Presentation Forms-B you’ll see that this Unicode range contains the joining forms of Arabic characters; and one way to perform contextual analysis involves mapping input characters (i.e., in their isolated form) to the appropriate joining form version from within the Unicode range Arabic Presentation Forms-B. After the contextual analysis is complete the next step is to apply an additional layer of shaping to ensure that certain ligatures are produced (such as lam alef). In addition, you then apply more advanced typographic features defined within the particular (OpenType) font you are using: such as accurate vowel placement, cursive positioning and so forth. This latter stage is often referred to as OpenType shaping.
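The mapping approach to contextual analysis can be sketched in a few lines of C. This is a toy version under several assumptions of my own: the table covers only six letters, the names (joining_entry, shape_contextual) are hypothetical, vowel marks are not handled, and no ligatures (such as lam alef) are formed. It relies on the layout of Arabic Presentation Forms-B, where the forms of each letter are consecutive: isolated, final and then, for letters that join on both sides, initial and medial.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

typedef struct {
    uint32_t base;      /* character in the 0600-06FF range */
    uint32_t isolated;  /* its isolated form in Presentation Forms-B */
    int dual;           /* 1: joins on both sides; 0: joins to the previous letter only */
} joining_entry;

/* Toy table: a handful of letters only. */
static const joining_entry joining_table[] = {
    {0x0627, 0xFE8D, 0},  /* ALEF */
    {0x0628, 0xFE8F, 1},  /* BEH  */
    {0x062A, 0xFE95, 1},  /* TEH  */
    {0x0643, 0xFED9, 1},  /* KAF  */
    {0x0644, 0xFEDD, 1},  /* LAM  */
    {0x0645, 0xFEE1, 1},  /* MEEM */
};

static const joining_entry *find_entry(uint32_t cp)
{
    size_t count = sizeof joining_table / sizeof joining_table[0];
    for (size_t i = 0; i < count; i++) {
        if (joining_table[i].base == cp) return &joining_table[i];
    }
    return NULL;
}

/* Map each character of in[] to a positional form in out[]. The output
   stays in logical order; characters not in the table are copied as-is. */
void shape_contextual(const uint32_t *in, uint32_t *out, size_t n)
{
    assert(in != NULL && out != NULL);
    for (size_t i = 0; i < n; i++) {
        const joining_entry *cur = find_entry(in[i]);
        if (cur == NULL) { out[i] = in[i]; continue; }
        const joining_entry *prev = (i > 0) ? find_entry(in[i - 1]) : NULL;
        const joining_entry *next = (i + 1 < n) ? find_entry(in[i + 1]) : NULL;
        int joins_prev = (prev != NULL && prev->dual);  /* previous letter can connect forward */
        int joins_next = (cur->dual && next != NULL);   /* this letter can connect forward */
        if (joins_prev && joins_next) out[i] = cur->isolated + 3;  /* medial */
        else if (joins_next)          out[i] = cur->isolated + 2;  /* initial */
        else if (joins_prev)          out[i] = cur->isolated + 1;  /* final */
        else                          out[i] = cur->isolated;      /* isolated */
    }
}
```

Given KAF, TEH, BEH the function picks the initial, medial and final forms respectively. Given BEH, ALEF, BEH the last BEH stays isolated because ALEF does not join to the letter that follows it.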

The key point is that OpenType font technology is designed to encapsulate advanced typographic rules within the font files themselves, using so-called OpenType tables: creating so-called “intelligent fonts”. You can think of these tables as containing an extensive set of “rules” which can be applied to a series of input glyphs in order to achieve a specific typographic objective. This is actually a fairly large and complex topic, which I’ll cover in a future post (“features” and “lookups”).

Despite OpenType font technology supporting advanced typography, you should note that the creators of specific fonts choose which features they want to support: some fonts are packed with advanced features, others may contain just a few basic rules. In short, OpenType fonts vary enormously so you should never assume “all fonts are created equal”: they are not.

And finally: libotf

The service provided by libotf is to apply the rules contained in an OpenType font: it is an OpenType shaping library, especially useful with complex text/scripts. You pass it a Unicode string and call various functions to “drive” the application of the OpenType tables contained within the font. Of course, your font needs to support or provide the feature you are asking for but libotf has a way to “ask” the font “Do you support this feature?” I’ll provide further information in a future post, together with some sample C code.

And finally, some screenshots

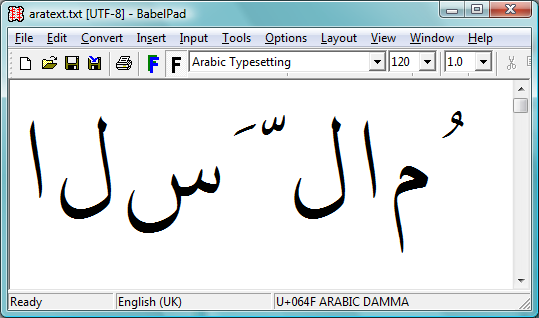

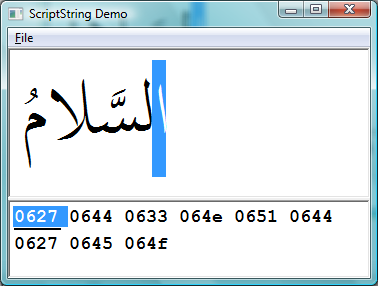

We’ve covered a lot of ground so, hopefully, the following screenshots might help to clarify the ideas presented above. These screenshots are also from BabelPad which has the ability to switch off the shaping engine to let you see the raw characters in logical order before any contextual analysis or shaping is applied.

The first screenshot shows the raw text in logical order before any shaping has been applied. This is the text that would be entered at the keyboard and saved into a TeX file. It is the sequence of input characters that LuaTeX would see if it read some TeX input containing Arabic text.

The following screenshot is the result of applying the Windows system shaping engine called Uniscribe. Of course, different operating systems have their own system services to perform complex shaping but the tasks are essentially the same: process the input text to render the display version, through the application of rules contained in the OpenType font.

One more screenshot, this time from a great little tool that I found on this excellent site.

The top section of the screenshot shows the fully shaped (i.e., via Uniscribe) and rendered version of the Unicode text shown in the lower part of the screen. Note carefully that the text stream is in logical order (see the highlighted character) and that the text is stored using the Unicode range 0600 to 06FF.

Summary

These posts are a lot of work and take quite some hours to write; so I hope that the above has provided a useful, albeit brief, overview of some important topics which are central to rendering/displaying or typesetting complex scripts.

Typesetting Arabic with LuaTeX [via a C plug-in] (Part 1)

Introduction

In this new series of posts I’m going to attempt an overview of the topics, concepts, ideas and technologies involved in typesetting Arabic with LuaTeX, via a DLL I’m writing in C. Actually, the C code is very substantially platform-independent so it should compile on non-Windows machines… one day, when it’s “finished”…

Up until 2 years ago I was teaching myself Arabic (see my Amazon book reviews) and had reached the point where I wanted to write up my notes and worked exercises: I needed to typeset Arabic and wanted to use a TeX-based solution. Having looked around I stumbled upon some truly amazing video presentations of Arabic typesetting work being undertaken by Idris Hamid and Hans Hagen, using a tool called LuaTeX: something I’d never heard of. I was truly stunned by what I saw: the quality of their Arabic typesetting was (is) incredible, so I had to find out more. A few hours later I’d worked out that the typesetting was being achieved through Hans Hagen’s ConTeXt package, with LuaTeX as the underlying TeX engine. However, I’m personally not a user of ConTeXt, but the LuaTeX engine was just so interesting that I had to explore it. Well, two years later and I’ve not done any further learning of Arabic, having replaced that activity with plenty of explorations into LuaTeX and a host of other technologies, particularly OpenType and Unicode.

Coming up to the present day, I’ve finally reached the point where I have puzzled out enough detail of the “big picture” to attempt a home-grown Arabic typesetting solution for LuaTeX, but one where most of the “heavy lifting” is done in C, with Lua code to interface with and talk to LuaTeX. For sure, there are ready-made options such as XeTeX or the range of Arabic typesetting solutions created by the TeX community. However, my interest is creating a solution that will just as easily output SVG or other non-PDF formats, plus allow the automated production of new and novel “typeset structures” and diagrams that will really help with learning Arabic: things I wish had been present in the many books I have bought and studied but which may just be too time-consuming, or difficult/expensive, to produce by “conventional” applications. These are big goals, but definitely achievable, albeit over a year or two of further work.

Sample

Just by way of an early example, see the following PDF, as usual, through the Google Docs viewer or download PDF here. The trained eye will certainly spot a few issues that need fixing but so far it’s not looking too bad :-). But there is a long, long way to go yet. The font used is Microsoft’s “Arabic Typesetting” because it contains a substantial number of OpenType features including cursive positioning, mark-to-base positioning, an enormous range of ligatures plus many other features which make it an ideal choice of font to work with (in my opinion). In the example (the made-up words) you can see the non-horizontal baseline achieved with cursive positioning plus the ability to control vowel placement with great flexibility.

But it’s still far from perfect, I’ll readily admit. I hope I can finish this work, and find the time to complete these articles. I’ll certainly try!

Excellent tutorial on Uniscribe

If you are interested in complex script typography and, in particular, exploring the Windows Uniscribe engine then this series of tutorials will be invaluable and save you a lot of time. Also provided is the C source code of Neatpad, a Unicode-aware text editor which uses Uniscribe. A real gem!