Notes and comments are inline with the C code

This is a fairly basic example that explains a little about libotf, just enough to “get started”. To run it, I built libotf (and FreeType) as static libraries and linked against them.

#include <windows.h>

#include <math.h>

#include <malloc.h>

#include <memory.h>

#include <stdio.h>

#include <stdlib.h>

#include <string.h> // standard home of memset

#include <wchar.h> // standard home of wcslen

// I'm including the FreeType headers directly, not via #include FT_FREETYPE_H

#include <ft2build.h>

#include <freetype.h>

#include <t1tables.h>

#include <ftoutln.h>

#include <ftbbox.h>

//#include FT_FREETYPE_H

#include <otf.h>

//#include <pcre.h>

//#include <time.h>

typedef unsigned char uint8_t;

typedef unsigned int uint32_t;

int main(int argc, char** argv)

{

FT_Library font_library;

FT_Face fontface;

FT_GlyphSlot cur_glyph;

FT_Glyph_Metrics glyph_metrics;

OTF_GlyphString gstring;

char * fontpath;

size_t numcodepoints;

OTF *otf;

int i;

// "arabictext" is a "wide character" string. It contains a sequence of Unicode codepoints

// for each character in our string. BUT NOTE: these codepoints will be the values of the

// UNSHAPED isolated Arabic characters. What you are looking at on screen here is the result of

// applying the operating system/browser shaping engine to shape the displayed version.

// It is really important to understand that !!

wchar_t * arabictext = L"حَرَكَات";

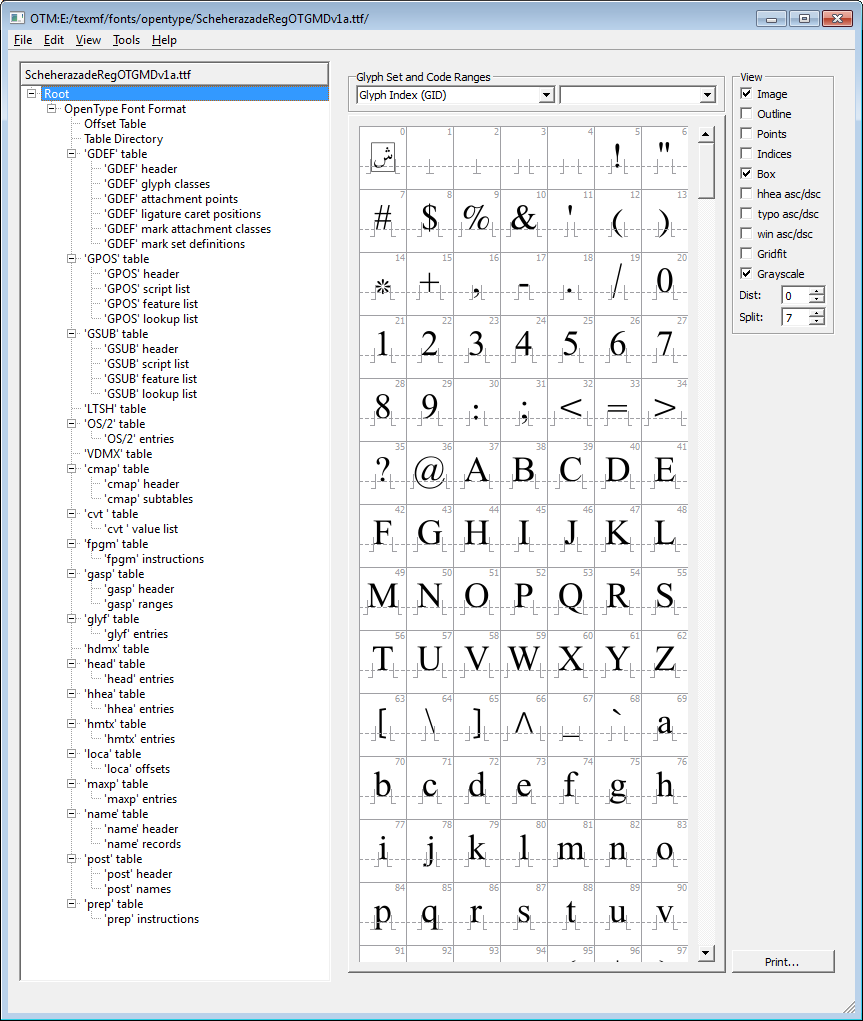

// I'm using the Scheherazade font from SIL (as amended by me)

fontpath="e:\\Volt\\ScheherazadeRegOT-1.005-developer\\sources\\ScheherarazadeGDversion3.ttf";

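// wcslen returns the string length in "wide character" units.

// For this text that equals the number of Unicode codepoints (i.e., characters).

// Be aware that wchar_t is 16 bits on Windows (UTF-16), so a character outside the

// Basic Multilingual Plane would count as two units; every character in this string is inside it.

// Obviously, if "arabictext" was encoded in UTF-8 (e.g., we read it from a file)

// we'd need to count the number of codepoints by converting the UTF-8

// back into Unicode character integers (codepoints)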

numcodepoints= wcslen(arabictext);

// gstring is the object we pass to the OTF library.

// First we need to tell it how long our gstring is.

// Initially, gstring.used = gstring.size until the libotf library starts to

// manipulate the gstring (glyph sequences) and perform various OpenType

// features/lookups (e.g., GSUB substitutions), which usually results in

// changes to the number of glyphs present in the string.

// OK, here's where we set up the gstring for use with the OTF library

gstring.used=numcodepoints;

gstring.size=numcodepoints;

// Now we need to create our actual glyph objects

// 1 for each codepoint in our text wchar_t * arabictext

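gstring.glyphs= malloc (sizeof (OTF_Glyph) * numcodepoints);

// malloc can fail, so check before writing to the buffer

if (gstring.glyphs == NULL) {

fprintf(stderr, "Out of memory\n");

return 1;

}

memset (gstring.glyphs, '\0', sizeof (OTF_Glyph) * numcodepoints);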

// Now we are ready to use the OTF library. I should make it VERY clear

// that here we are NOT, I repeat NOT doing any shaping of the Arabic

// text. libotf does not transform the string of isolated Arabic glyph forms into their

// initial, medial or final shapes. That must happen BEFORE you pass the

// gstring to libotf. The following is just a trivial demo showing the basics.

// Firstly, we need to assign the Unicode codepoint (character value)

// to each of the glyphs in our gstring object --- setting gstring.glyphs[i].c for glyph i.

// (as contained in arabictext[i])

for (i=0; i < numcodepoints; i++) {

gstring.glyphs[i].c = arabictext[i];

}

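// Get our instance of the libotf library

// OTF_open returns NULL if the font cannot be opened, so check before using it

otf = OTF_open(fontpath);

if (otf == NULL) {

fprintf(stderr, "Could not open font %s\n", fontpath);

free(gstring.glyphs);

return 1;

}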

// Now we'll call the really interesting functions.

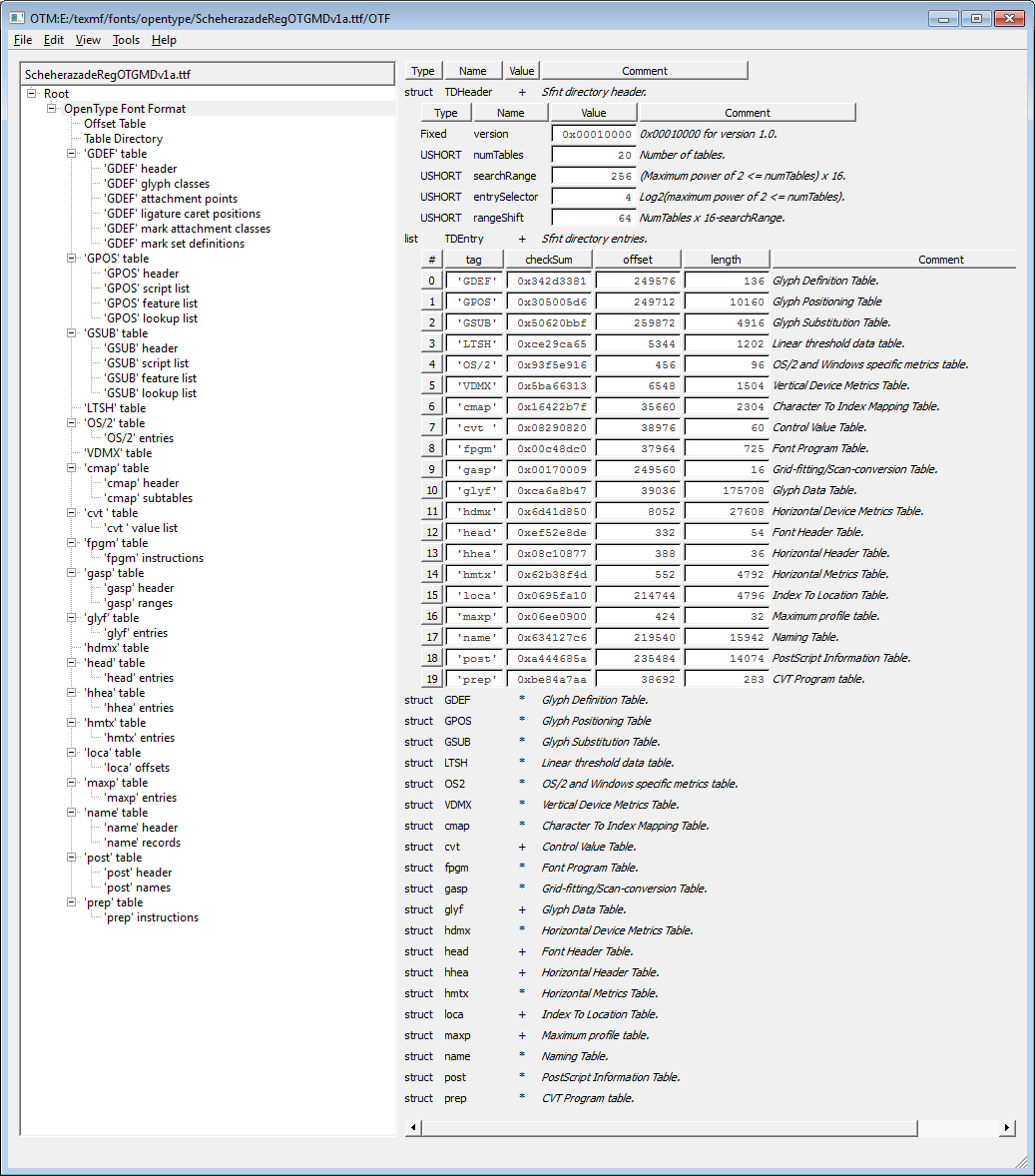

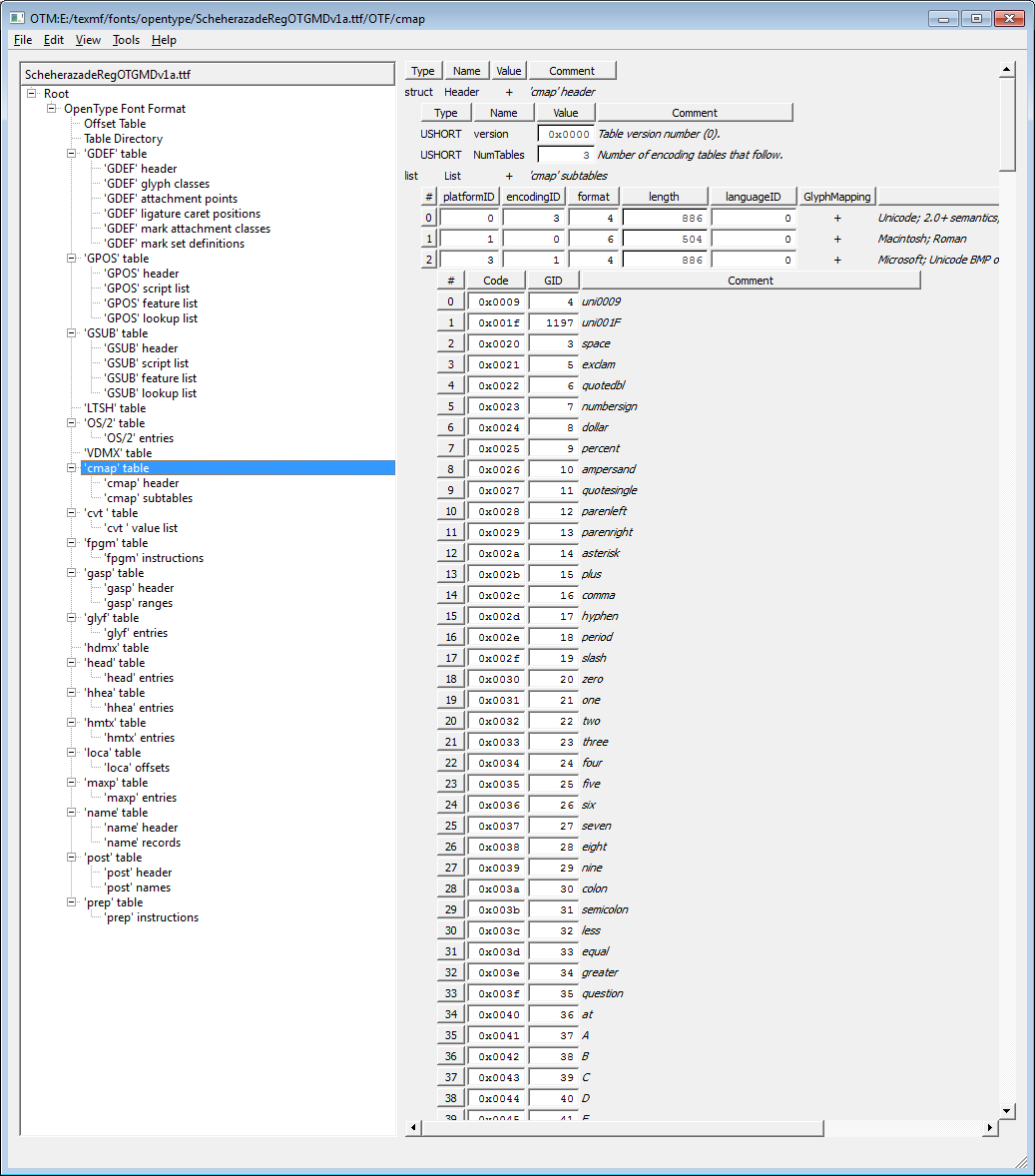

// Firstly, we'll call OTF_drive_cmap2 (otf, &gstring, 3, 1)

// to assign GLYPH IDENTIFIERS to our gstring. The last two arguments select the cmap

// subtable: platform ID 3 (Windows), encoding ID 1 (Unicode BMP). What's happening is that

// libotf is using the CMAP table in the font to say "Hey, I've got the Unicode code point X,

// can you tell me the GLYPH IDENTIFIER that maps to in the font?"

OTF_drive_cmap2 (otf, &gstring, 3, 1);

// OK, so what's the result of this? Let's see:

for (i=0; i < numcodepoints; i++) {

// Cast to long so the arguments match the %ld conversions

printf("Unicode character %ld maps to GLYPH IDENTIFIER %ld \n", (long) gstring.glyphs[i].c, (long) gstring.glyphs[i].glyph_id);

}

//The output is:

/*

Unicode character 1581 maps to GLYPH IDENTIFIER 340

Unicode character 1614 maps to GLYPH IDENTIFIER 907

Unicode character 1585 maps to GLYPH IDENTIFIER 290

Unicode character 1614 maps to GLYPH IDENTIFIER 907

Unicode character 1603 maps to GLYPH IDENTIFIER 395

Unicode character 1614 maps to GLYPH IDENTIFIER 907

Unicode character 1575 maps to GLYPH IDENTIFIER 257

Unicode character 1578 maps to GLYPH IDENTIFIER 322

*/

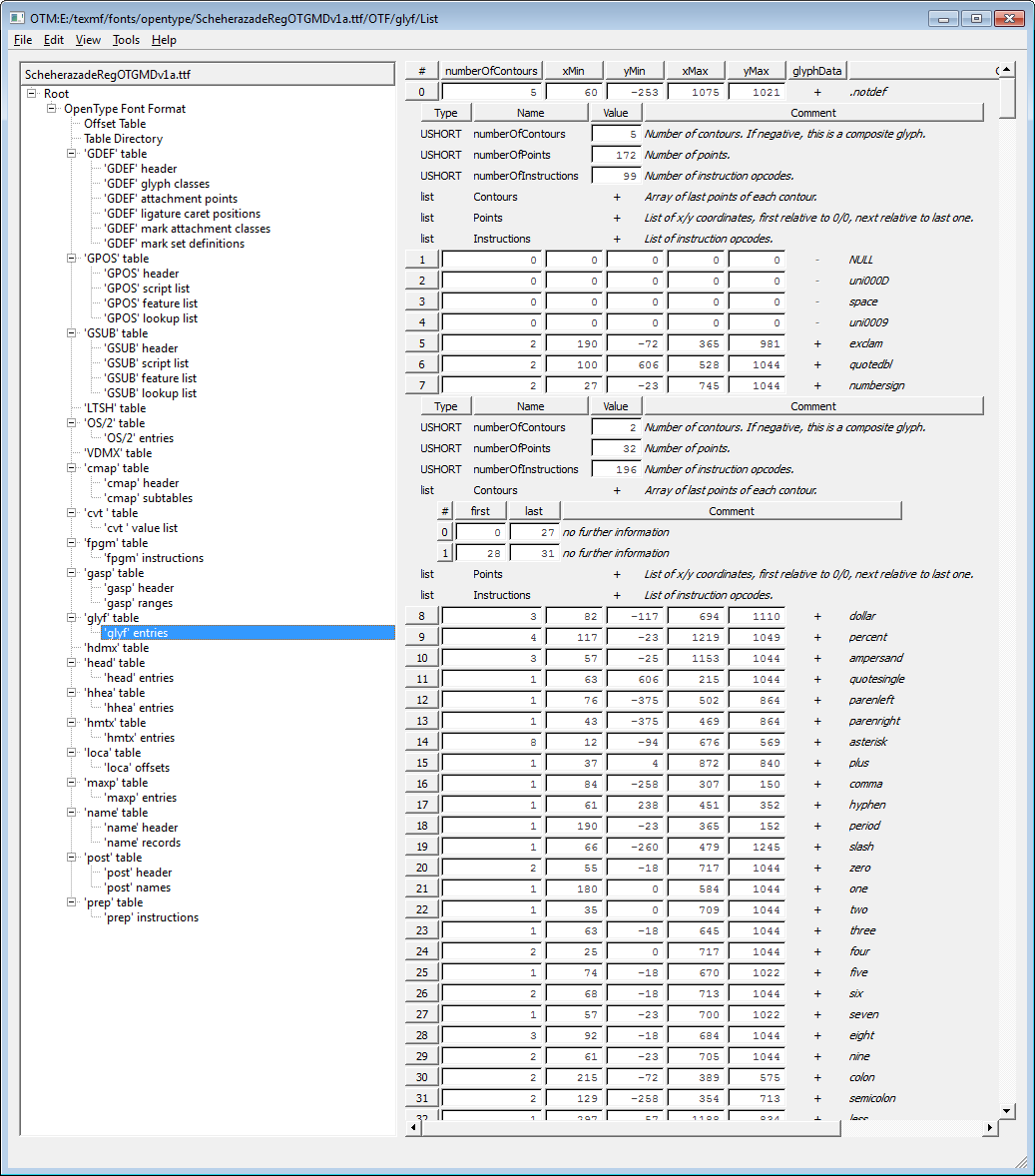

// Next, we'll call OTF_drive_gdef (otf, &gstring), whose job is

// to tell us what TYPE of glyph (called the Glyph Class) we are dealing with. It reads the OpenType

// GDEF table, which can assign an identifier (Glyph Class) to each glyph

// in the font.

// See http://partners.adobe.com/public/developer/opentype/index_table_formats5.html

// Glyph Class 1 = Base glyph (single character, spacing glyph)

// Glyph Class 2 = Ligature glyph (multiple character, spacing glyph)

// Glyph Class 3 = Mark glyph (non-spacing combining glyph)

// Glyph Class 4 = Component glyph (part of single character, spacing glyph)

OTF_drive_gdef (otf, &gstring);

// Let's see what we got from that:

for (i=0; i < numcodepoints; i++) {

// Cast to long so the arguments match the %ld conversions

printf("Unicode character %ld maps to GLYPH IDENTIFIER %ld which is Glyph Class %ld\n", (long) gstring.glyphs[i].c, (long) gstring.glyphs[i].glyph_id, (long) gstring.glyphs[i].GlyphClass);

}

/*

Unicode character 1581 maps to GLYPH IDENTIFIER 340 which is Glyph Class 1

Unicode character 1614 maps to GLYPH IDENTIFIER 907 which is Glyph Class 3

Unicode character 1585 maps to GLYPH IDENTIFIER 290 which is Glyph Class 1

Unicode character 1614 maps to GLYPH IDENTIFIER 907 which is Glyph Class 3

Unicode character 1603 maps to GLYPH IDENTIFIER 395 which is Glyph Class 1

Unicode character 1614 maps to GLYPH IDENTIFIER 907 which is Glyph Class 3

Unicode character 1575 maps to GLYPH IDENTIFIER 257 which is Glyph Class 1

Unicode character 1578 maps to GLYPH IDENTIFIER 322 which is Glyph Class 1

*/

// OK, that's the end. Time to get out of here.

// Let's be tidy!

free(gstring.glyphs);

OTF_close (otf);

return 0;

}